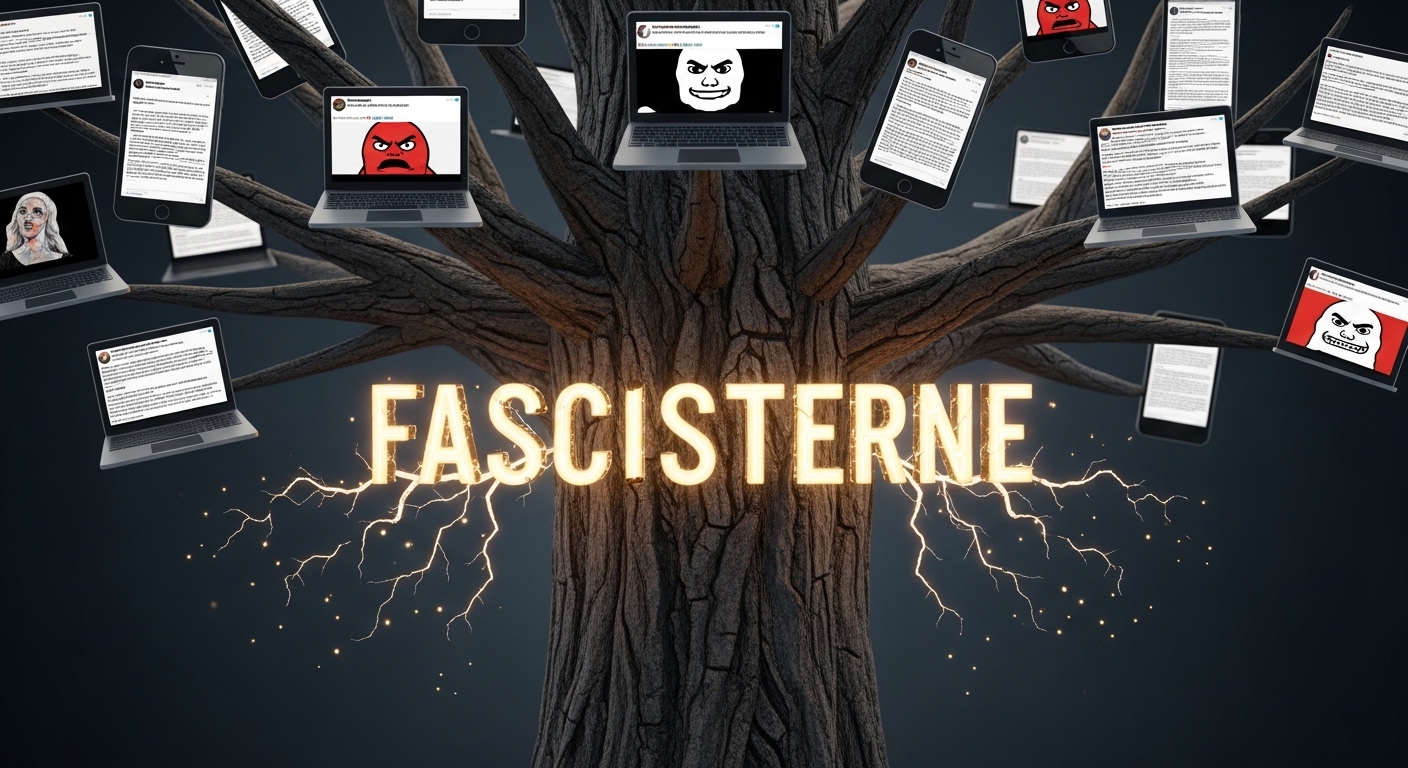

Fascism is making a disturbing comeback in the digital age. What was once relegated to the annals of history has found new life, fueled by social media platforms that enable extremist ideologies to flourish. The rise of fascisterne—those who promote these divisive beliefs—is alarming. With just a few clicks, individuals can connect with like-minded extremists and share propaganda at unprecedented speeds.

Social media isn’t just a tool for communication; it’s become an incubator for radical ideas, garnering attention from those seeking community and validation. In this blog post, we will explore how digital platforms have transformed the landscape of extremism, examining both the tactics used by fascists and the responses from tech companies trying to stem this tide. The stakes are high as we navigate through algorithms designed to keep us hooked while inadvertently promoting hateful content.

Join us as we delve into this pressing issue that affects not only our online spaces but also shapes societal attitudes towards diversity and inclusion.

The role of social media in spreading extremist ideologies

Social media has become a powerful tool for spreading extremist ideologies, including fascism. Platforms like Facebook, Twitter, and Instagram allow individuals and groups to share their beliefs with unprecedented reach.

These sites enable the rapid dissemination of propaganda. A single post can go viral, attracting followers who may not have encountered such views otherwise. This creates an environment where radical ideas thrive.

Moreover, social media facilitates connections between like-minded individuals across geographical boundaries. Users can find communities that validate their beliefs and intensify their commitment to these ideologies.

The anonymity provided by online platforms also emboldens users to express extreme opinions without fear of immediate repercussions. This lack of accountability further fuels the spread of hateful rhetoric and divisive narratives.

As discussions around freedom of speech continue, the challenge lies in balancing open dialogue with protecting society from harmful extremism propagated through digital means.

Case studies of how fascists have used social media to recruit and radicalize individuals

Fascisterne have skillfully exploited social media to attract and radicalize individuals. One chilling example is the recruitment tactics employed by white supremacist groups on platforms like Facebook and Twitter. They create seemingly innocuous pages that gradually introduce extremist content.

These pages often start with discussions around common grievances, allowing users to feel a sense of belonging. As engagement grows, more explicit propaganda emerges, drawing in those who might feel disenfranchised.

Another case involves Telegram channels where fascists share messages and memes filled with hate speech. These spaces foster tight-knit communities, isolating users from opposing perspectives while reinforcing extreme ideologies.

Moreover, live-streaming events have become a disturbing trend among these groups. By broadcasting rallies or violent acts online, they reach wider audiences, glorifying their actions and inspiring others to join their cause.

The impact of algorithms and echo chambers on promoting extremist content

Algorithms shape what we see online. They prioritize content that engages, often leading us down paths of extreme ideologies. This can create echo chambers where only like-minded voices resonate.

In these digital spaces, users are bombarded with extremist messages tailored to their interests. The more they engage, the more radical content is fed to them. It’s a cycle that reinforces beliefs and isolates individuals from differing perspectives.
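The feedback loop described here can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not a description of how any real platform ranks content: the `Post` class, the 0 to 10 `intensity` scale, the scoring formula, and the `bonus` parameter are all hypothetical. The ranker scores posts by how close they are to what the user last engaged with, plus a small bonus for more provocative posts, and the user is assumed to always engage with the top result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    title: str
    intensity: int  # hypothetical scale: 0 = mild, 10 = most extreme


def predicted_engagement(post, profile, bonus):
    # Assumption: posts similar to past engagement score higher,
    # and more provocative posts get a small extra boost.
    return -(post.intensity - profile) ** 2 + bonus * post.intensity


def simulate(posts, start_profile, rounds, bonus=2):
    """Return the intensity of the top-ranked post in each round."""
    profile = start_profile
    history = []
    for _ in range(rounds):
        top = max(posts, key=lambda p: predicted_engagement(p, profile, bonus))
        history.append(top.intensity)
        # Assumption: the user engages with the top post,
        # so the profile shifts to match it.
        profile = top.intensity
    return history


posts = [Post(f"post-{level}", level) for level in range(11)]

print(simulate(posts, start_profile=1, rounds=5))           # [2, 3, 4, 5, 6]
print(simulate(posts, start_profile=1, rounds=5, bonus=0))  # [1, 1, 1, 1, 1]
```

In this toy model, each round recommends something slightly more intense than the last, so the feed drifts steadily upward. With the provocation bonus set to zero, the feed stays where the user started, which suggests that even a small engagement-driven bias can compound over repeated interactions.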

These algorithms don’t just promote divisive ideas; they normalize them. What starts as curiosity can spiral into full-fledged radicalization as users become entrenched in extremist communities.

The design of social media platforms contributes significantly to this phenomenon. Instead of fostering dialogue, they often amplify hate and division, leaving little room for constructive discourse or understanding among diverse viewpoints.

Efforts by social media companies to combat extremism

Social media companies have taken various steps to combat extremism. Many platforms have implemented stricter content moderation policies. They aim to detect and remove hate speech and extremist propaganda swiftly.

Artificial intelligence plays a crucial role in these efforts. Algorithms are developed to identify harmful content before it spreads widely. However, challenges remain as extremists constantly find new ways to circumvent these measures.

Partnerships with organizations specializing in countering violent extremism have also been established. These collaborations help create educational resources designed to debunk misinformation and foster critical thinking among users.

Moreover, some companies promote positive narratives that challenge extremist views. This proactive approach seeks to create an online environment less conducive to radicalization.

Despite these initiatives, critics argue that social media giants often fall short of their promises. The balance between free expression and preventing harm is a complex issue that continues to spark debate across the digital landscape.

Criticisms of these efforts and calls for stricter regulations

Critics argue that current measures taken by social media companies are insufficient to combat the spread of fascist ideologies. The voluntary nature of these efforts often leads to inconsistent enforcement and a lack of transparency.

Many believe that self-regulation is not enough. They call for stricter government regulations to hold platforms accountable for enabling extremist content. Without clear guidelines, harmful narratives continue to thrive online.

Moreover, critics highlight that algorithm-driven recommendations frequently amplify hate speech rather than suppress it. This creates an environment where dangerous ideas can flourish unchecked.

Advocates for reform suggest increased oversight and collaboration between tech companies and lawmakers. They emphasize the importance of creating a safer digital space free from the radicalization tactics employed by fascisterne.

The conversation around accountability in the digital world has gained momentum, pushing society toward meaningful change in how we address online extremism.

The need for greater responsibility and accountability in the digital world

As digital landscapes evolve, the responsibility of platforms grows. Social media giants must take ownership of their role in shaping discourse. The unchecked spread of fascist ideologies online poses direct threats to society.

Users deserve safe spaces free from hate and extremism. It’s crucial for companies to implement rigorous content moderation policies. This not only protects vulnerable individuals but also fosters a healthier online environment.

Transparency is another vital aspect. Users should understand how content is recommended and curated. Clear guidelines can deter extremist narratives from taking root.

Moreover, collaboration with experts in psychology and sociology could enhance strategies against radicalization. By prioritizing research-informed approaches, platforms can better address the complexities of user behavior.

Greater accountability means that platforms face consequences when they fail to protect communities from harm. Emphasizing ethical practices will help create a more inclusive digital world while minimizing the risks associated with the spread of fascisterne ideologies.

Conclusion

The rise of fascism and extremism in recent years has been alarming. As digital platforms continue to evolve, they inadvertently create spaces where extremist ideologies can flourish. The role social media plays in this phenomenon cannot be overlooked.

Fascisterne have adeptly harnessed the power of these platforms to spread their messages far and wide. Through tailored content that appeals to disillusioned individuals, they manage to recruit and radicalize with ease. Case studies reveal a disturbing trend: social media is often the first point of contact for those susceptible to these ideologies.

Algorithms designed to enhance user engagement contribute significantly to this issue. They promote content that aligns with users’ existing beliefs, creating echo chambers that amplify extremist narratives. Users caught in this cycle find it increasingly difficult to encounter opposing viewpoints.

Although social media companies recognize these challenges, their efforts remain inconsistent at best. While some initiatives aim to combat hate speech and misinformation, critics argue they’re not enough. Calls for stricter regulations are growing louder as communities demand accountability from tech giants.

As society grapples with the implications of unchecked extremism online, it’s evident that more must be done. Greater responsibility lies with digital platforms if we hope to curb the influence of fascisterne while fostering an inclusive environment free from hate.